Solutions for Every Industry

expert.ai Platform for Insurance

Reduce risk, improve win rates, and increase capacity for commercial insurance carriers and brokers.

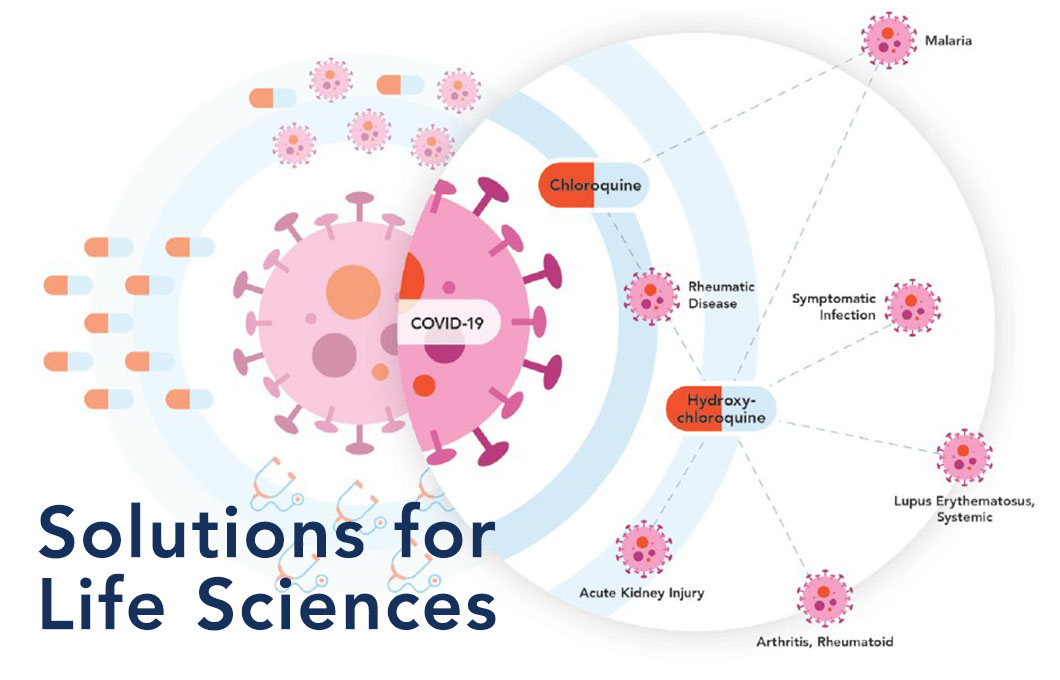

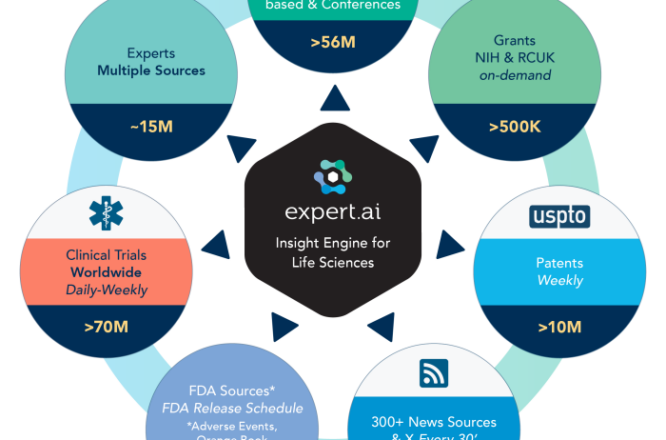

expert.ai Insight Engine for Life Sciences

Make the most of your data to improve R&D, prevent operational risks, and enhance the quality of your treatment and care.
